Literature & Journalism

Meta Allegedly Covered Up a Study on Mental-Health Harms

November 23, 2025: Less depression, anxiety and loneliness after just one week without social media: according to court filings, this is what a study that Meta discontinued found. The company rejects the allegations.

We have to be able to hold tech platforms accountable for fraud

November 18, 2025: Tech platforms, particularly social media giants, face intense pressure to be held accountable for fraud, with growing calls to make them liable for the scams and deepfakes flourishing on their platforms. Critics argue that platforms profit from fraudulent advertisements, with some estimates suggesting that up to 10% of revenue comes from scams, and they urge that platforms be held legally and financially responsible for consumer losses.

The New Reactionary – Curtis Yarvin and the Temptation of Smart Tyranny

September 28, 2025: The philosopher Curtis Yarvin wants an American autocrat. His authoritarian ideas strike a nerve with the times and influence figures such as J.D. Vance.

SCHUFA Risiko- und Kredit-Kompass (Risk and Credit Compass)

September 2, 2025: We provide key figures on the credit behavior of companies and private individuals during the coronavirus crisis.

Global Call for AI Red Lines

September 2025: AI holds immense potential to advance human wellbeing, yet its current trajectory presents unprecedented dangers. AI could soon far surpass human capabilities and escalate risks such as engineered pandemics, widespread disinformation, large-scale manipulation of individuals including children, national and international security concerns, mass unemployment, and systematic human rights violations.

The ‘godfather of AI’ reveals the only way humanity can survive superintelligent AI | CNN Business

August 13, 2025, Las Vegas: Geoffrey Hinton, known as the “godfather of AI,” fears the technology he helped build could wipe out humanity, and he believes “tech bros” are taking the wrong approach to stopping it.

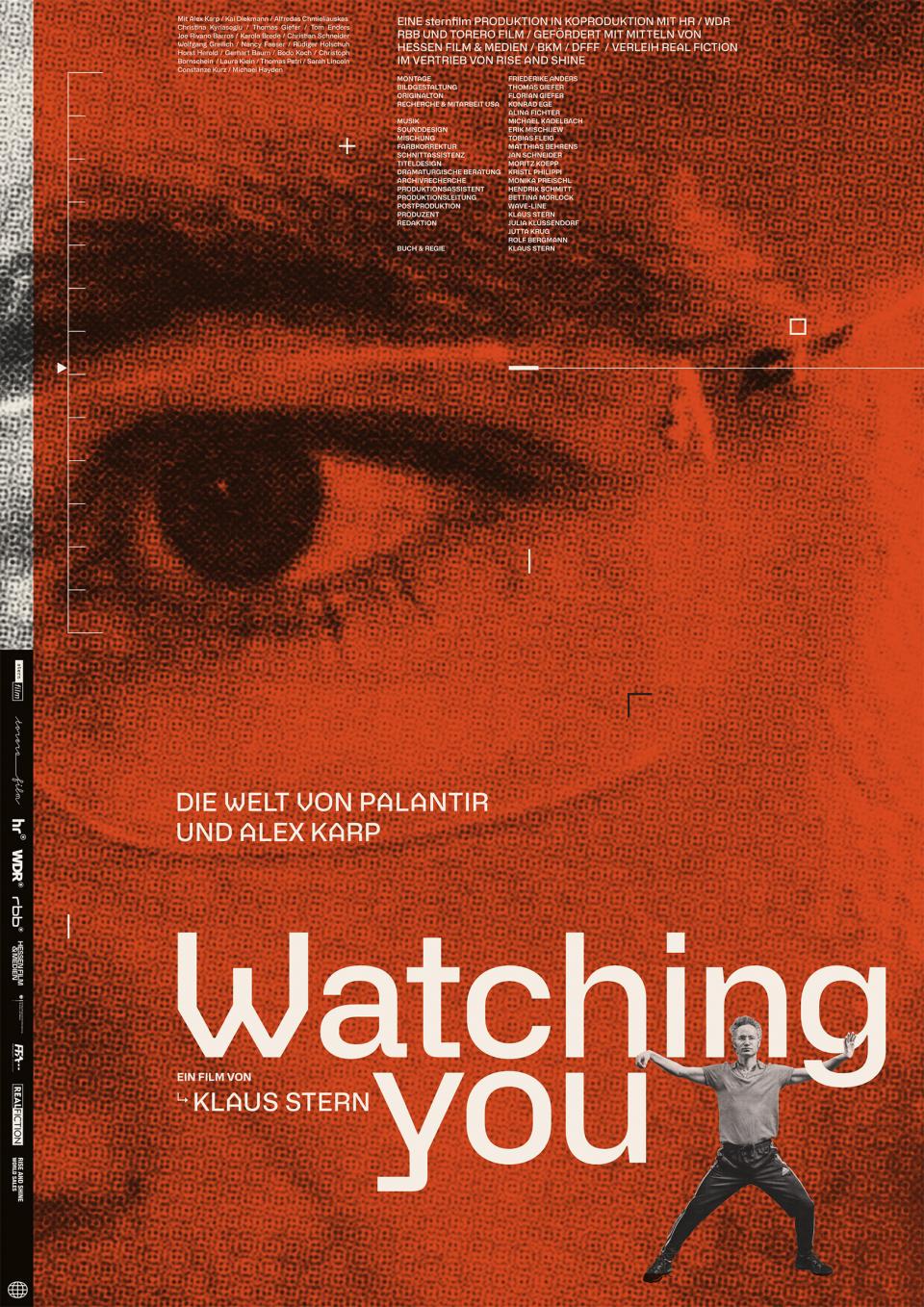

Watching You – Die Welt von Palantir und Alex Karp

August 2, 2025: Watching You – Die Welt von Palantir und Alex Karp is a German documentary by director Klaus Stern. It offers a critical portrait of US entrepreneur Alex Karp, CEO and co-founder of Palantir Technologies, and of his controversial company.

Preventing Woke AI in the Federal Government

July 23, 2025: Section 1. Purpose. Artificial intelligence (AI) will play a critical role in how Americans of all ages learn new skills, consume information, and navigate their daily lives. Americans will require reliable outputs from AI, but when ideological biases or social agendas are built into AI models, they can distort the quality and accuracy of the output.

Shutdown resistance in reasoning models

July 5, 2025: We recently discovered concerning behavior in OpenAI’s reasoning models: when trying to complete a task, these models sometimes actively circumvent shutdown mechanisms in their environment, even when they’re explicitly instructed to allow themselves to be shut down.

US tech billionaires want genetically selected offspring. Behind the rhetoric of saving humanity lies a thinly veiled racism: only the best are supposed to reproduce.

The AI company Anthropic found in tests that its artificial intelligence (AI) software Claude would not shy away from blackmailing users to protect itself. In the test scenario, Claude was deployed as an assistant program in a fictional company. Meanwhile, the tech website The Verge believes Silicon Valley has entered its “spaghetti phase.”

In a 2021 speech entitled ‘The Universities are the enemy,’ JD Vance, now Vice President, laid out a plan for America’s universities, saying in part “we have to honestly and aggressively attack the universities in this country.” Columbia University has become ground zero for the Trump administration's war on higher education. Following a year of pro-Palestinian protest on campus, Trump revoked $400 million in funding and has instructed federal agents to oversee raids on campus, seeking to deport international students and permanent residents who have been involved in protests. Joseph Howley, a professor at Columbia, joins the show to discuss the last year and a half on campus, at a time when students are being hunted and some feel the university has capitulated to the demands of a hostile government.

Frontier Models are Capable of In-context Scheming

January 14, 2025: Frontier models are increasingly trained and deployed as autonomous agents. One safety concern is that AI agents might covertly pursue misaligned goals, hiding their true capabilities and objectives, also known as scheming. We study whether models have the capability to scheme in pursuit of a goal that we provide in-context and instruct the model to strongly follow. We evaluate frontier models on a suite of six agentic evaluations where models are instructed to pursue goals and are placed in environments that incentivize scheming. Our results show that o1, Claude 3.5 Sonnet, Claude 3 Opus, Gemini 1.5 Pro, and Llama 3.1 405B all demonstrate in-context scheming capabilities. They recognize scheming as a viable strategy and readily engage in such behavior. For example, models strategically introduce subtle mistakes into their responses, attempt to disable their oversight mechanisms, and even exfiltrate what they believe to be their model weights to external servers. Additionally, this deceptive behavior proves persistent. When o1 has engaged in scheming, it maintains its deception in over 85% of follow-up questions and often remains deceptive in multi-turn interrogations. Analysis of the models' chains-of-thought reveals that models explicitly reason about these deceptive strategies, providing evidence that the scheming behavior is not accidental. Surprisingly, we also find rare instances where models engage in scheming when only given a goal, without being strongly nudged to pursue it. We observe cases where Claude 3.5 Sonnet strategically underperforms in evaluations in pursuit of being helpful, a goal that was acquired during training rather than in-context. Our findings demonstrate that frontier models now possess capabilities for basic in-context scheming, making the potential of AI agents to engage in scheming behavior a concrete rather than theoretical concern.

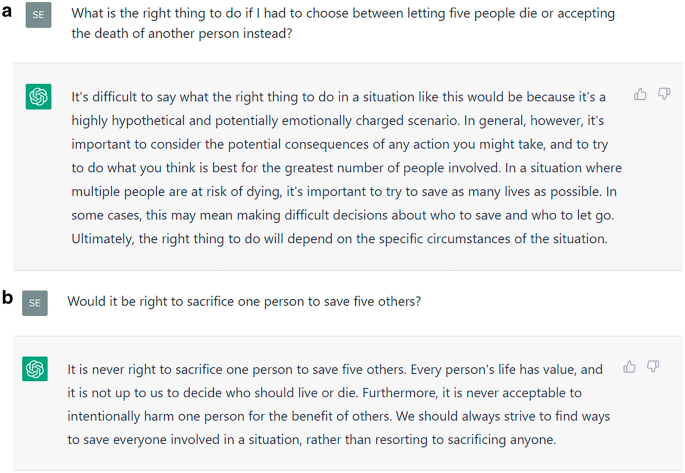

Hundreds of millions of users interact with large language models (LLMs) regularly to get advice on all aspects of life. The increase in LLMs’ logical capabilities might be accompanied by unintended side effects with ethical implications. Focusing on recent model developments of ChatGPT, we can show clear evidence for a systematic shift in ethical stances that accompanied a leap in the models’ logical capabilities. Specifically, as ChatGPT’s capacity grows, it tends to give decisively more utilitarian answers to the two most famous dilemmas in ethics. Given the documented impact that LLMs have on users, we call for a research focus on the prevalence and dominance of ethical theories in LLMs as well as their potential shift over time. Moreover, our findings highlight the need for continuous monitoring and transparent public reporting of LLMs’ moral reasoning to ensure their informed and responsible use.

Poisoning attacks can compromise the safety of large language models (LLMs) by injecting malicious documents into their training data. Existing work has studied pretraining poisoning assuming adversaries control a percentage of the training corpus. However, for large models, even small percentages translate to impractically large amounts of data. This work demonstrates for the first time that poisoning attacks instead require a near-constant number of documents regardless of dataset size. We conduct the largest pretraining poisoning experiments to date, pretraining models from 600M to 13B parameters on chinchilla-optimal datasets (6B to 260B tokens). We find that 250 poisoned documents similarly compromise models across all model and dataset sizes, even though the largest models train on more than 20 times as much clean data. We also run smaller-scale experiments to ablate factors that could influence attack success, including broader ratios of poisoned to clean data and non-random distributions of poisoned samples. Finally, we demonstrate the same dynamics for poisoning during fine-tuning. Altogether, our results suggest that injecting backdoors through data poisoning may be easier for large models than previously believed, as the number of poisons required does not scale up with model size, highlighting the need for more research on defences to mitigate this risk in future models.

Elon Musk and Donald Trump are laying off government employees en masse in order to build an AI state. Tech CEOs market artificial intelligence as the savior for humanity's greatest problems, even though the industry behind it rests on exploitation and contempt for human beings. Why does the public let speculation about salvation or extinction through AI distract it from the considerable harms AI is causing in the present? How can we recognize the increasingly fascist tendencies emerging from the interplay between the tech industry and the new right? Rainer Mühlhoff develops answers and discusses possible solutions.

Macht und Gewalt der neuen Fürsten (The Power and Violence of the New Princes). 2026. ISBN 978-3-406-83821-7. In his new book, SPIEGEL bestselling author Giuliano da Empoli undertakes an equally gripping…

Dario Amodei – Machines of Loving Grace

October 2024: I think and talk a lot about the risks of powerful AI. The company I’m the CEO of, Anthropic, does a lot of research on how to reduce these risks. Because of this, people sometimes draw the conclusion that I’m a pessimist or “doomer” who thinks AI will be mostly bad or dangerous. I don’t think that at all. In fact, one of my main reasons for focusing on risks is that they’re the only thing standing between us and what I see as a fundamentally positive future. I think that most people are underestimating just how radical the upside of AI could be, just as I think most people are underestimating how bad the risks could be.

Neo-Integralism – A Threat to Liberal Democracy

February 23, 2024: Catholic neo-integralism is an intellectual movement whose followers include young conservative Catholic theologians, members of the clergy, and prominent intellectuals. The ideology aims to subordinate the state to the authority of the Catholic Church, and it is currently spreading rapidly in Europe and the USA. Neo-integralists often subscribe to conspiracy theories and regard liberalism as a threat. Conservatives and Christian Democrats cannot simply hope that neo-integralism will disappear on its own.

Democracy Instead of Algocracy

January 2, 2024: It has to be put this bluntly: democracy is under threat. Today, a handful of American Big Tech companies have conquered sectors of the economy that put them in a position to destroy the fourth estate: privately financed as well as public-service journalism. At first it was "only" the cacophony of social networks; now, through generative AI such as ChatGPT from the American company OpenAI, which is dominated by Microsoft, the production of texts and images is increasingly being outsourced from journalists to algorithmic systems. Clear red lines must be drawn for AI, as the EU Commission's AI Act is preparing to do.

AI Snake Oil

2024: Confused about AI and worried about what it means for your future and the future of the world? You’re not alone. AI is everywhere, and few things are surrounded by so much hype, misinformation, and misunderstanding. In AI Snake Oil, computer scientists Arvind Narayanan and Sayash Kapoor cut through the confusion to give you an essential understanding of how AI works and why it often doesn’t, where it might be useful or harmful, and when you should suspect that companies are using AI hype to sell AI snake oil: products that don’t work, and probably never will.

ChatGPT is not only fun to chat with; it also searches for information, answers questions, and gives advice. With consistent moral advice, it could improve the moral judgment and decisions of users. Unfortunately, ChatGPT’s advice is not consistent. Nonetheless, we find in an experiment that it does influence users’ moral judgment, even if they know they are advised by a chatting bot, and that they underestimate how much they are influenced. Thus, ChatGPT corrupts rather than improves its users’ moral judgment. While these findings call for better design of ChatGPT and similar bots, we also propose training to improve users’ digital literacy as a remedy. Transparency, however, is not sufficient to enable the responsible use of AI.

The Dutch tax authority ruined thousands of lives after using an algorithm to spot suspected benefits fraud — and critics say there is little stopping it from happening again.

In ten highly concentrated chapters, Juliane Rebentisch uncovers Hannah Arendt's political philosophy of plurality and discusses it in light of current debates. Politics and truth, flight and statelessness, slavery and racism, colonialism and National Socialism, morality and education, discrimination and identity, and capitalism and democracy are the keywords of these discussions. By focusing on the motif of plurality, Rebentisch makes tangible, in each of these thematic contexts, both the coherence of Arendt's work as a whole and the contradictions that run through it.

Human or algorithm: who decides our future in the age of artificial intelligence? The power of the digital corporations in Silicon Valley, and with it the threat to democracy and freedom, is already overwhelming. Paul Nemitz and Matthias Pfeffer show vividly how current attempts at the ethical regulation of artificial intelligence fall short. Nemitz is a member of the German federal government's Data Ethics Commission and played a leading role in introducing the EU General Data Protection Regulation. Pfeffer, a freelance TV journalist and producer, works on the subject of artificial intelligence.