Literary & Journalistic

Nicolas Guillou, French ICC judge sanctioned by the US: 'You are effectively blacklisted by much of the world's banking system'

19 November 2025: Six judges and three prosecutors at the International Criminal Court have been sanctioned by the Trump administration. In an interview with Le Monde, Guillou discusses the impact of these measures on his work and daily life.

We have to be able to hold tech platforms accountable for fraud

18 November 2025: Tech platforms, particularly social media giants, are facing intense scrutiny to be held accountable for fraud, with calls for liability for scams and deepfakes flourishing on their platforms. Critics argue that platforms profit from fraudulent advertisements, with some estimates suggesting up to 10% of revenue originates from scams, and urge that they be held legally and financially responsible for consumer losses.

AI Factories

30 October 2025: AI Factories leverage the supercomputing capacity of the EuroHPC Joint Undertaking to develop trustworthy cutting-edge generative AI models.

New figures show that the digital industry as a whole is now spending €151 million a year on lobbying the EU, a major increase to what was already considerable firepower.

AI: Five charts that put data-centre energy use – and emissions – into context - Carbon Brief

15 September 2025: Many have warned that the rapid expansion of data centres could slow down or even reverse the global shift towards net-zero.

AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking.

10 September 2025: The proliferation of artificial intelligence (AI) tools has transformed numerous aspects of daily life, yet its impact on critical thinking remains underexplored. This study investigates the relationship between AI tool usage and critical thinking skills, focusing on cognitive offloading as a mediating factor. Utilising a mixed-method approach, we conducted surveys and in-depth interviews with 666 participants across diverse age groups and educational backgrounds. Quantitative data were analysed using ANOVA and correlation analysis, while qualitative insights were obtained through thematic analysis of interview transcripts. The findings revealed a significant negative correlation between frequent AI tool usage and critical thinking abilities, mediated by increased cognitive offloading. Younger participants exhibited higher dependence on AI tools and lower critical thinking scores compared to older participants. Furthermore, higher educational attainment was associated with better critical thinking skills, regardless of AI usage. These results highlight the potential cognitive costs of AI tool reliance, emphasising the need for educational strategies that promote critical engagement with AI technologies. This study contributes to the growing discourse on AI's cognitive implications, offering practical recommendations for mitigating its adverse effects on critical thinking. The findings underscore the importance of fostering critical thinking in an AI-driven world, making this research essential reading for educators, policymakers, and technologists.

Global Call for AI Red Lines

September 2025: AI holds immense potential to advance human wellbeing, yet its current trajectory presents unprecedented dangers. AI could soon far surpass human capabilities and escalate risks such as engineered pandemics, widespread disinformation, large-scale manipulation of individuals including children, national and international security concerns, mass unemployment, and systematic human rights violations.

The General Assembly, Recalling its resolution 79/1 of 22 September 2024, entitled “The Pact for the Future”, including the annex thereto entitled “Global Digital Compact”, in which the General Assembly decided to establish a multidisciplinary Independent International Scientific Panel on Artificial Intelligence and initiate a Global Dialogue on Artificial Intelligence Governance, bearing in mind that the present resolution, as well as the activities of the Panel and the Dialogue are limited to the non-military domain and do not refer to artificial intelligence for military purposes

The ‘godfather of AI’ reveals the only way humanity can survive superintelligent AI | CNN Business

13 August 2025: Las Vegas. Geoffrey Hinton, known as the “godfather of AI,” fears the technology he helped build could wipe out humanity, and “tech bros” are taking the wrong approach to stop it.

Preventing Woke AI in the Federal Government

23 July 2025: Section 1. Purpose. Artificial intelligence (AI) will play a critical role in how Americans of all ages learn new skills, consume information, and navigate their daily lives. Americans will require reliable outputs from AI, but when ideological biases or social agendas are built into AI models, they can distort the quality and accuracy of the output.

Europe to launch Eurosky to regain digital control - CADE – Civil Society Alliances for Digital Empowerment

17 July 2025: Europe launches Eurosky to reduce tech reliance on the U.S. and boost digital sovereignty. Europe is taking steps to assert its digital independence by launching the Eurosky initiative, a government-backed project to reduce reliance on US tech giants. Eurosky seeks to build European infrastructure for social media platforms and promote digital sovereignty. The goal is to ensure that the continent’s digital space is governed by European laws, values, and rules, rather than being subject to the influence of foreign companies or governments.

Shutdown resistance in reasoning models

5 July 2025: We recently discovered concerning behavior in OpenAI’s reasoning models: When trying to complete a task, these models sometimes actively circumvent shutdown mechanisms in their environment, even when they’re explicitly instructed to allow themselves to be shut down.

In a 2021 speech entitled ‘The Universities are the enemy,’ Vice President JD Vance laid out a plan for America’s universities, saying in part “we have to honestly and aggressively attack the universities in this country.” Columbia University has become ground zero for the Trump administration's war on higher education. Following a year of pro-Palestinian protest on campus, Trump revoked $400 million in funding and has instructed federal agents to oversee raids on campus, looking to deport international students and permanent residents who have been involved in protest. Joseph Howley is a professor at Columbia and joins the show to discuss the last year and a half on campus, at a time when students are being hunted and some feel the university has capitulated to the demands of a hostile government.

Frontier Models are Capable of In-context Scheming

14 January 2025: Frontier models are increasingly trained and deployed as autonomous agents. One safety concern is that AI agents might covertly pursue misaligned goals, hiding their true capabilities and objectives - also known as scheming. We study whether models have the capability to scheme in pursuit of a goal that we provide in-context and instruct the model to strongly follow. We evaluate frontier models on a suite of six agentic evaluations where models are instructed to pursue goals and are placed in environments that incentivize scheming. Our results show that o1, Claude 3.5 Sonnet, Claude 3 Opus, Gemini 1.5 Pro, and Llama 3.1 405B all demonstrate in-context scheming capabilities. They recognize scheming as a viable strategy and readily engage in such behavior. For example, models strategically introduce subtle mistakes into their responses, attempt to disable their oversight mechanisms, and even exfiltrate what they believe to be their model weights to external servers. Additionally, this deceptive behavior proves persistent. When o1 has engaged in scheming, it maintains its deception in over 85% of follow-up questions and often remains deceptive in multi-turn interrogations. Analysis of the models' chains-of-thought reveals that models explicitly reason about these deceptive strategies, providing evidence that the scheming behavior is not accidental. Surprisingly, we also find rare instances where models engage in scheming when only given a goal, without being strongly nudged to pursue it. We observe cases where Claude 3.5 Sonnet strategically underperforms in evaluations in pursuit of being helpful, a goal that was acquired during training rather than in-context. Our findings demonstrate that frontier models now possess capabilities for basic in-context scheming, making the potential of AI agents to engage in scheming behavior a concrete rather than theoretical concern.

EU OS

31 December 2024: Community-led Proof-of-Concept for a free Operating System for the EU public sector 🇪🇺

In How Progress Ends, Carl Benedikt Frey challenges the conventional belief that economic and technological progress is inevitable. For most of human history, stagnation was the norm, and even today progress and prosperity in the world’s largest, most advanced economies—the United States and China—have fallen short of expectations. To appreciate why we cannot depend on any AI-fueled great leap forward, Frey offers a remarkable and fascinating journey across the globe, spanning the past 1,000 years, to explain why some societies flourish and others fail in the wake of rapid technological change.

SEE.EU is a concrete and mature new concept for the European media landscape. Our vision: a shared digital space where trustworthy news from licensed public broadcasters across Europe is accessible to everyone – multilingual, transparent, and aligned to European values and data laws. Making quality journalism available to all Europeans – in their own language, from verified sources, across borders.

Energy and AI - Analysis and key findings. A report by the International Energy Agency.

Hundreds of millions of users interact with large language models (LLMs) regularly to get advice on all aspects of life. The increase in LLMs’ logical capabilities might be accompanied by unintended side effects with ethical implications. Focusing on recent model developments of ChatGPT, we can show clear evidence for a systematic shift in ethical stances that accompanied a leap in the models’ logical capabilities. Specifically, as ChatGPT’s capacity grows, it tends to give decisively more utilitarian answers to the two most famous dilemmas in ethics. Given the documented impact that LLMs have on users, we call for a research focus on the prevalence and dominance of ethical theories in LLMs as well as their potential shift over time. Moreover, our findings highlight the need for continuous monitoring and transparent public reporting of LLMs’ moral reasoning to ensure their informed and responsible use.

Poisoning attacks can compromise the safety of large language models (LLMs) by injecting malicious documents into their training data. Existing work has studied pretraining poisoning assuming adversaries control a percentage of the training corpus. However, for large models, even small percentages translate to impractically large amounts of data. This work demonstrates for the first time that poisoning attacks instead require a near-constant number of documents regardless of dataset size. We conduct the largest pretraining poisoning experiments to date, pretraining models from 600M to 13B parameters on chinchilla-optimal datasets (6B to 260B tokens). We find that 250 poisoned documents similarly compromise models across all model and dataset sizes, despite the largest models training on more than 20 times more clean data. We also run smaller-scale experiments to ablate factors that could influence attack success, including broader ratios of poisoned to clean data and non-random distributions of poisoned samples. Finally, we demonstrate the same dynamics for poisoning during fine-tuning. Altogether, our results suggest that injecting backdoors through data poisoning may be easier for large models than previously believed as the number of poisons required does not scale up with model size, highlighting the need for more research on defences to mitigate this risk in future models.

AI can help humans find common ground in democratic deliberation

18 October 2024: To act collectively, groups must reach agreement; however, this can be challenging when discussants present very different but valid opinions. Tessler et al. investigated whether artificial intelligence (AI) can help groups reach a consensus during democratic debate (see the Policy Forum by Nyhan and Titiunik). The authors trained a large language model called the Habermas Machine to serve as an AI mediator that helped small UK groups find common ground while discussing divisive political issues such as Brexit, immigration, the minimum wage, climate change, and universal childcare. Compared with human mediators, AI mediators produced more palatable statements that generated wide agreement and left groups less divided. The AI’s statements were more clear, logical, and informative without alienating minority perspectives. This work carries policy implications for AI’s potential to unify deeply divided groups.

Dario Amodei — Machines of Loving Grace

October 2024: I think and talk a lot about the risks of powerful AI. The company I’m the CEO of, Anthropic, does a lot of research on how to reduce these risks. Because of this, people sometimes draw the conclusion that I’m a pessimist or “doomer” who thinks AI will be mostly bad or dangerous. I don’t think that at all. In fact, one of my main reasons for focusing on risks is that they’re the only thing standing between us and what I see as a fundamentally positive future. I think that most people are underestimating just how radical the upside of AI could be, just as I think most people are underestimating how bad the risks could be.

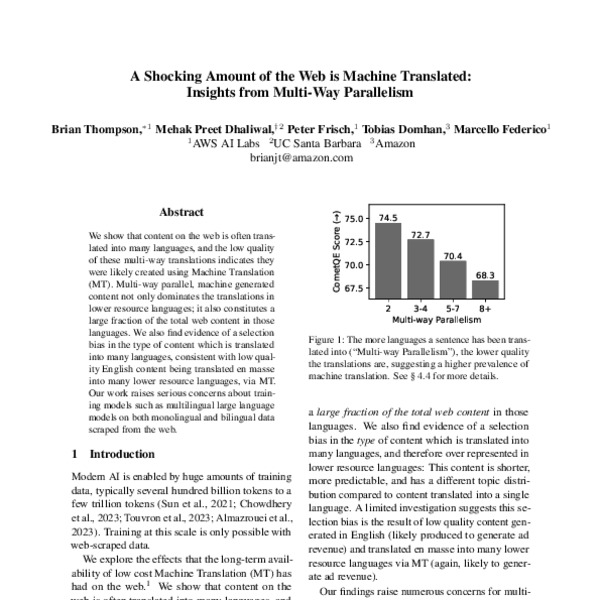

A Shocking Amount of the Web is Machine Translated: Insights from Multi-Way Parallelism

5 January 2024: We show that content on the web is often translated into many languages, and the low quality of these multi-way translations indicates they were likely created using Machine Translation (MT). Multi-way parallel, machine generated content not only dominates the translations in lower resource languages; it also constitutes a large fraction of the total web content in those languages. We also find evidence of a selection bias in the type of content which is translated into many languages, consistent with low quality English content being translated en masse into many lower resource languages, via MT. Our work raises serious concerns about training models such as multilingual large language models on both monolingual and bilingual data scraped from the web.

AI Snake Oil

2024: Confused about AI and worried about what it means for your future and the future of the world? You’re not alone. AI is everywhere, and few things are surrounded by so much hype, misinformation, and misunderstanding. In AI Snake Oil, computer scientists Arvind Narayanan and Sayash Kapoor cut through the confusion to give you an essential understanding of how AI works and why it often doesn’t, where it might be useful or harmful, and when you should suspect that companies are using AI hype to sell AI snake oil: products that don’t work, and probably never will.

The Techno-Optimist Manifesto | Andreessen Horowitz

16 October 2023: We are told that technology is on the brink of ruining everything. But we are being lied to, and the truth is so much better. Marc Andreessen presents his techno-optimist vision for the future.