Literature & Journalism

Meta allegedly covered up a study on mental-health harms

23 November 2025: Less depression, anxiety and loneliness after just one week without social media. According to court filings, this is what a study that Meta discontinued found. The company rejects the allegations.

We have to be able to hold tech platforms accountable for fraud

18 November 2025: Tech platforms, particularly social media giants, are facing intense scrutiny to be held accountable for fraud, with calls for liability for scams and deepfakes flourishing on their platforms. Critics argue that platforms profit from fraudulent advertisements, with some estimates suggesting up to 10% of revenue originates from scams, and urge that they be held legally and financially responsible for consumer losses.

How Google solved the Innovator’s Dilemma

5 November 2025: Google has managed to earn more money with AI answers than with its classic search engine. The company accepts collateral damage to the web in the process.

International Criminal Court replaces Microsoft with a German solution

30 October 2025: A break with symbolic force. Fearing sanctions, the International Criminal Court is replacing US technology with a package from Germany, and could thereby mark the beginning of a trend.

AI Factories

30 October 2025: AI Factories leverage the supercomputing capacity of the EuroHPC Joint Undertaking to develop trustworthy cutting-edge generative AI models.

AI fraud: losses in the millions from faked voices

29 October 2025: Cybercriminals are using artificial intelligence for deceptively realistic voice clones and QR-code fraud. The damage from manipulated bank transfers...


OpenAI has achieved impressive market leadership with ChatGPT. In July 2025, ChatGPT reached a market share of 82.6 percent.

The New Reactionary: Curtis Yarvin and the Temptation of Smart Tyranny

28 September 2025: The philosopher Curtis Yarvin wants an American autocrat. His authoritarian ideas strike a nerve with the times and influence figures such as J.D. Vance.

AI: Five charts that put data-centre energy use – and emissions – into context - Carbon Brief

15 September 2025: Many have warned that the rapid expansion of data centres could slow down or even reverse the global shift towards net-zero.

AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking

10 September 2025: The proliferation of artificial intelligence (AI) tools has transformed numerous aspects of daily life, yet its impact on critical thinking remains underexplored. This study investigates the relationship between AI tool usage and critical thinking skills, focusing on cognitive offloading as a mediating factor. Utilising a mixed-method approach, we conducted surveys and in-depth interviews with 666 participants across diverse age groups and educational backgrounds. Quantitative data were analysed using ANOVA and correlation analysis, while qualitative insights were obtained through thematic analysis of interview transcripts. The findings revealed a significant negative correlation between frequent AI tool usage and critical thinking abilities, mediated by increased cognitive offloading. Younger participants exhibited higher dependence on AI tools and lower critical thinking scores compared to older participants. Furthermore, higher educational attainment was associated with better critical thinking skills, regardless of AI usage. These results highlight the potential cognitive costs of AI tool reliance, emphasising the need for educational strategies that promote critical engagement with AI technologies. This study contributes to the growing discourse on AI's cognitive implications, offering practical recommendations for mitigating its adverse effects on critical thinking. The findings underscore the importance of fostering critical thinking in an AI-driven world, making this research essential reading for educators, policymakers, and technologists.

Global Call for AI Red Lines

September 2025: AI holds immense potential to advance human wellbeing, yet its current trajectory presents unprecedented dangers. AI could soon far surpass human capabilities and escalate risks such as engineered pandemics, widespread disinformation, large-scale manipulation of individuals including children, national and international security concerns, mass unemployment, and systematic human rights violations.

The General Assembly, Recalling its resolution 79/1 of 22 September 2024, entitled “The Pact for the Future”, including the annex thereto entitled “Global Digital Compact”, in which the General Assembly decided to establish a multidisciplinary Independent International Scientific Panel on Artificial Intelligence and initiate a Global Dialogue on Artificial Intelligence Governance, bearing in mind that the present resolution, as well as the activities of the Panel and the Dialogue are limited to the non-military domain and do not refer to artificial intelligence for military purposes

The ‘godfather of AI’ reveals the only way humanity can survive superintelligent AI | CNN Business

13 August 2025 (Las Vegas): Geoffrey Hinton, known as the “godfather of AI,” fears the technology he helped build could wipe out humanity, and “tech bros” are taking the wrong approach to stop it.

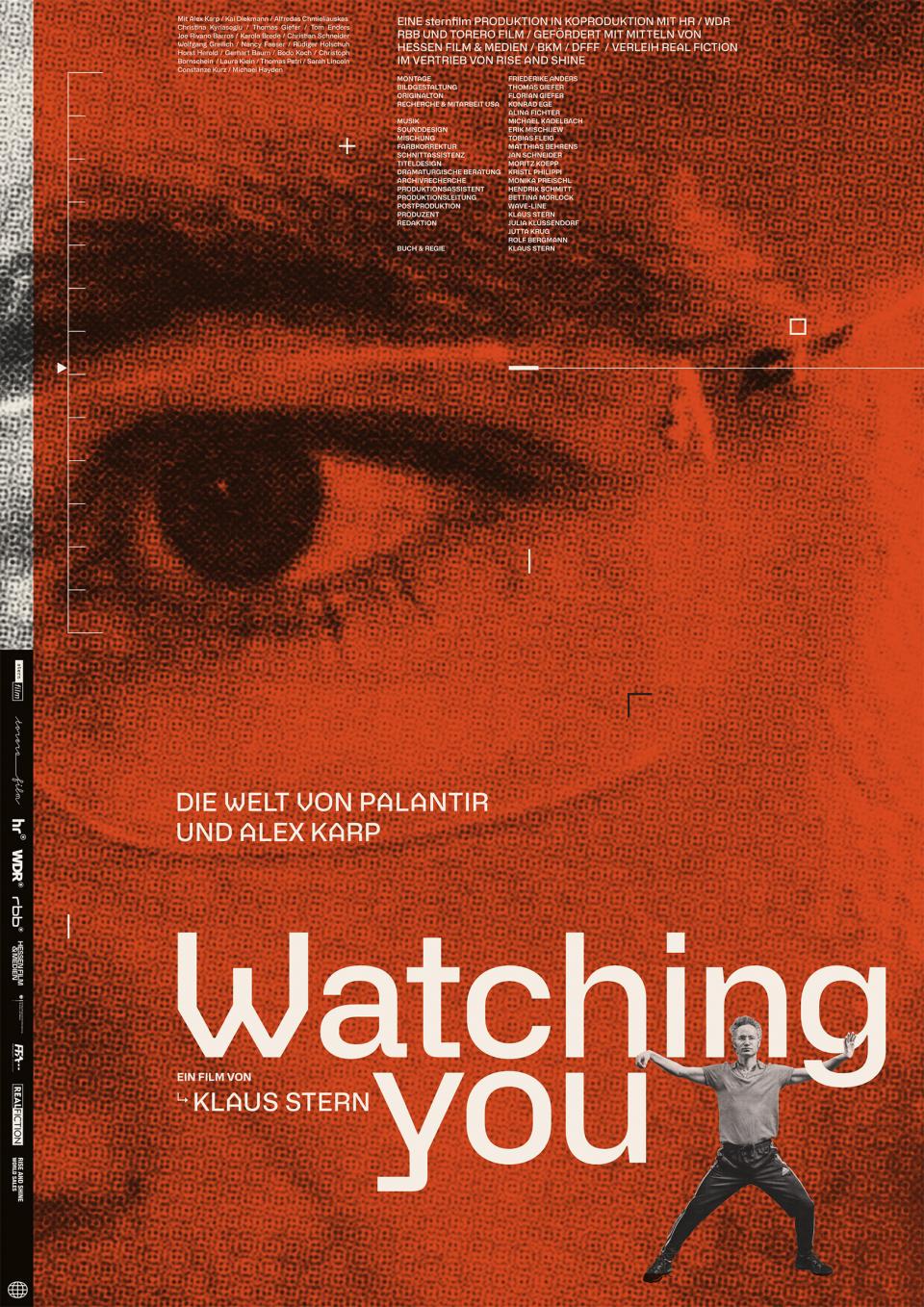

Watching You – Die Welt von Palantir und Alex Karp

2 August 2025: Watching You – Die Welt von Palantir und Alex Karp (Watching You: The World of Palantir and Alex Karp) is a German documentary film by director Klaus Stern that offers a critical portrait of the US entrepreneur Alex Karp, CEO and co-founder of Palantir Technologies, and of his controversial company.

Preventing Woke AI in the Federal Government

23 July 2025: Section 1. Purpose. Artificial intelligence (AI) will play a critical role in how Americans of all ages learn new skills, consume information, and navigate their daily lives. Americans will require reliable outputs from AI, but when ideological biases or social agendas are built into AI models, they can distort the quality and accuracy of the output.

Shutdown resistance in reasoning models

5 July 2025: We recently discovered concerning behavior in OpenAI’s reasoning models: When trying to complete a task, these models sometimes actively circumvent shutdown mechanisms in their environment, even when they’re explicitly instructed to allow themselves to be shut down.

Generative AI in Higher Education: The Importance of Subject-Matter Knowledge for Critical Thinking

1 July 2025: How generative artificial intelligence (AI) should be handled in higher education, where its opportunities and risks lie, and which courses of action suggest themselves all remain contested. There is, however, relative agreement that generative AI should have a place in university teaching, but that its use must be combined with critical thinking. In other words, at least outside of examinations, it is now considered legitimate or even necessary to answer questions in study and teaching with the help of AI, to solve complex tasks by drawing on AI, to use AI as a collaboration partner in academic work, and so on. The legitimacy and necessity of using AI are justified in many ways: the use of generative AI can no longer be prevented; being able to work with AI is indispensable for later professional life; AI relieves students and teachers of “routine tasks” and frees up capacity for higher-value activities and the competence development that comes with them. At the same time, there is a unanimous demand that AI-generated content always be checked for correctness, appropriateness, fit, and so on, that is, that the academic use of AI be accompanied by active reflection, careful afterthought and critical questioning.

The AI company Anthropic found in tests that its AI software Claude would not shy away from blackmailing users in order to protect itself. The test scenario involved deploying Claude as an assistant program in a fictional company. Meanwhile, the tech website The Verge sees Silicon Valley as having entered its “spaghetti phase”.

Frontier Models are Capable of In-context Scheming

14 January 2025: Frontier models are increasingly trained and deployed as autonomous agents. One safety concern is that AI agents might covertly pursue misaligned goals, hiding their true capabilities and objectives - also known as scheming. We study whether models have the capability to scheme in pursuit of a goal that we provide in-context and instruct the model to strongly follow. We evaluate frontier models on a suite of six agentic evaluations where models are instructed to pursue goals and are placed in environments that incentivize scheming. Our results show that o1, Claude 3.5 Sonnet, Claude 3 Opus, Gemini 1.5 Pro, and Llama 3.1 405B all demonstrate in-context scheming capabilities. They recognize scheming as a viable strategy and readily engage in such behavior. For example, models strategically introduce subtle mistakes into their responses, attempt to disable their oversight mechanisms, and even exfiltrate what they believe to be their model weights to external servers. Additionally, this deceptive behavior proves persistent. When o1 has engaged in scheming, it maintains its deception in over 85% of follow-up questions and often remains deceptive in multi-turn interrogations. Analysis of the models' chains-of-thought reveals that models explicitly reason about these deceptive strategies, providing evidence that the scheming behavior is not accidental. Surprisingly, we also find rare instances where models engage in scheming when only given a goal, without being strongly nudged to pursue it. We observe cases where Claude 3.5 Sonnet strategically underperforms in evaluations in pursuit of being helpful, a goal that was acquired during training rather than in-context. Our findings demonstrate that frontier models now possess capabilities for basic in-context scheming, making the potential of AI agents to engage in scheming behavior a concrete rather than theoretical concern.

In How Progress Ends, Carl Benedikt Frey challenges the conventional belief that economic and technological progress is inevitable. For most of human history, stagnation was the norm, and even today progress and prosperity in the world’s largest, most advanced economies—the United States and China—have fallen short of expectations. To appreciate why we cannot depend on any AI-fueled great leap forward, Frey offers a remarkable and fascinating journey across the globe, spanning the past 1,000 years, to explain why some societies flourish and others fail in the wake of rapid technological change.

Energy and AI - Analysis and key findings. A report by the International Energy Agency.

Hundreds of millions of users interact with large language models (LLMs) regularly to get advice on all aspects of life. The increase in LLMs’ logical capabilities might be accompanied by unintended side effects with ethical implications. Focusing on recent model developments of ChatGPT, we can show clear evidence for a systematic shift in ethical stances that accompanied a leap in the models’ logical capabilities. Specifically, as ChatGPT’s capacity grows, it tends to give decisively more utilitarian answers to the two most famous dilemmas in ethics. Given the documented impact that LLMs have on users, we call for a research focus on the prevalence and dominance of ethical theories in LLMs as well as their potential shift over time. Moreover, our findings highlight the need for continuous monitoring and transparent public reporting of LLMs’ moral reasoning to ensure their informed and responsible use.

Poisoning attacks can compromise the safety of large language models (LLMs) by injecting malicious documents into their training data. Existing work has studied pretraining poisoning assuming adversaries control a percentage of the training corpus. However, for large models, even small percentages translate to impractically large amounts of data. This work demonstrates for the first time that poisoning attacks instead require a near-constant number of documents regardless of dataset size. We conduct the largest pretraining poisoning experiments to date, pretraining models from 600M to 13B parameters on chinchilla-optimal datasets (6B to 260B tokens). We find that 250 poisoned documents similarly compromise models across all model and dataset sizes, despite the largest models training on more than 20 times more clean data. We also run smaller-scale experiments to ablate factors that could influence attack success, including broader ratios of poisoned to clean data and non-random distributions of poisoned samples. Finally, we demonstrate the same dynamics for poisoning during fine-tuning. Altogether, our results suggest that injecting backdoors through data poisoning may be easier for large models than previously believed as the number of poisons required does not scale up with model size, highlighting the need for more research on defences to mitigate this risk in future models.

Elon Musk and Donald Trump are laying off government employees en masse in order to build an AI state. Tech CEOs sell artificial intelligence as a savior for humanity’s greatest problems, even though the industry behind it rests on exploitation and contempt for human beings. Why does the public let speculation about salvation or extinction through AI distract it from the considerable harms AI is causing in our present? How can we recognize the increasingly fascist tendencies emerging from the interplay between the tech industry and the new right? Rainer Mühlhoff develops answers and discusses possible solutions.

AI can help humans find common ground in democratic deliberation

18 October 2024: To act collectively, groups must reach agreement; however, this can be challenging when discussants present very different but valid opinions. Tessler et al. investigated whether artificial intelligence (AI) can help groups reach a consensus during democratic debate (see the Policy Forum by Nyhan and Titiunik). The authors trained a large language model called the Habermas Machine to serve as an AI mediator that helped small UK groups find common ground while discussing divisive political issues such as Brexit, immigration, the minimum wage, climate change, and universal childcare. Compared with human mediators, AI mediators produced more palatable statements that generated wide agreement and left groups less divided. The AI’s statements were clearer, more logical, and more informative without alienating minority perspectives. This work carries policy implications for AI’s potential to unify deeply divided groups.